Egocentric Hand Segmentation

Abstract

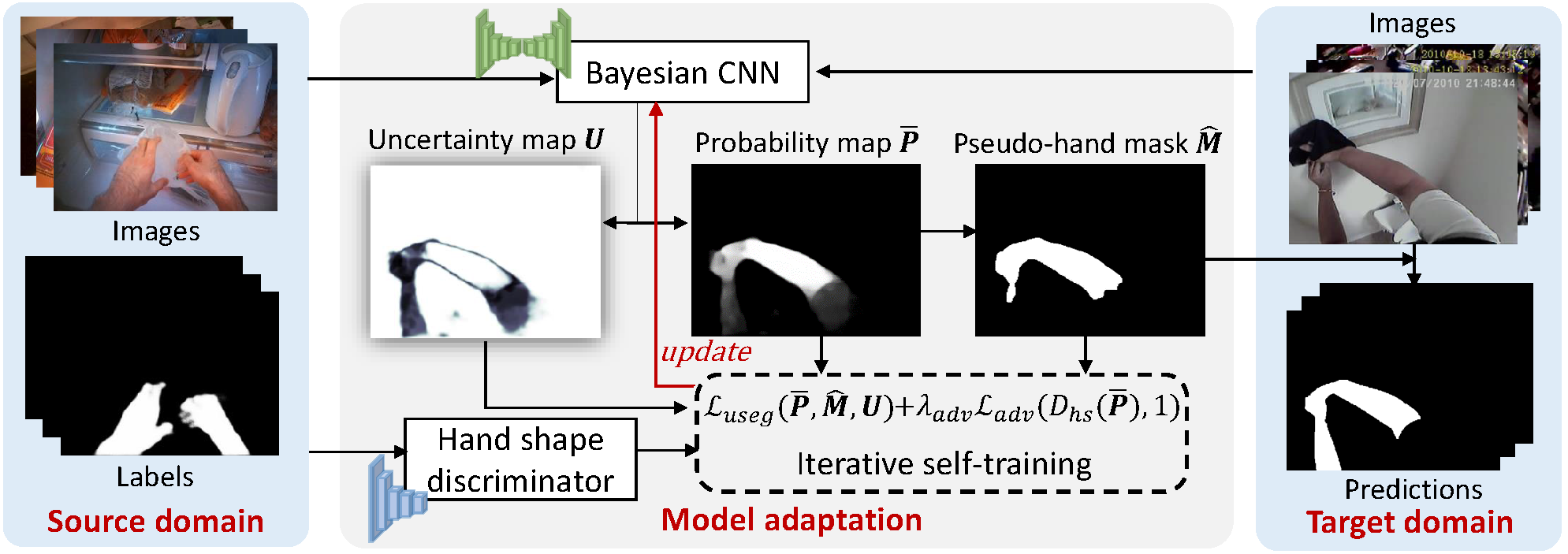

Although CNNs have significantly improved the performance of hand segmentation in egocentric videos, generalizing the trained models to new domains, e.g., unseen environments, remains challenging. In this work, we solve the hand segmentation generalization problem without requiring segmentation labels in the target domain. To this end, we propose a Bayesian CNN-based model adaptation framework for hand segmentation, which introduces and considers two key factors: 1) prediction uncertainty when the model is applied in a new domain and 2) common information about hand shapes shared across domains. Consequently, we propose an iterative self-training method for hand segmentation in the new domain, which is guided by the model uncertainty estimated by a Bayesian CNN. We further use an adversarial component in our framework to utilize shared information about hand shapes to constrain the model adaptation process. Experiments on multiple egocentric datasets show that the proposed method significantly improves the generalization performance of hand segmentation.
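To make the idea of uncertainty-guided self-training more concrete, the following is a minimal sketch of one pseudo-labeling step, not the authors' implementation. It assumes that the Bayesian CNN is approximated by several stochastic forward passes (for example, with dropout kept active at test time), that uncertainty is measured by the entropy of the averaged hand probability, and that pixels above a fixed entropy threshold are ignored. The function names, the entropy measure, and the threshold value `max_entropy=0.3` are all hypothetical choices made for illustration.

```python
import math


def binary_entropy(p, eps=1e-12):
    """Entropy (in nats) of a Bernoulli variable with probability p."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def predictive_stats(samples):
    """Average several stochastic predictions and score their uncertainty.

    samples: list of T lists; each inner list holds the per-pixel hand
    probabilities from one stochastic forward pass (assumed input format).
    Returns (means, entropies), one value per pixel.
    """
    n_passes = len(samples)
    n_pixels = len(samples[0])
    means, entropies = [], []
    for i in range(n_pixels):
        mean_p = sum(sample[i] for sample in samples) / n_passes
        means.append(mean_p)
        entropies.append(binary_entropy(mean_p))
    return means, entropies


def select_pseudo_labels(means, entropies, max_entropy=0.3):
    """Keep confident pixels as pseudo-labels; mark the rest as ignored.

    Returns 1 (hand), 0 (background), or None (too uncertain to use).
    The threshold is an illustrative assumption, not a value from the paper.
    """
    labels = []
    for mean_p, entropy in zip(means, entropies):
        if entropy <= max_entropy:
            labels.append(1 if mean_p >= 0.5 else 0)
        else:
            labels.append(None)
    return labels


# Three stochastic passes over three pixels (made-up numbers).
samples = [
    [0.95, 0.5, 0.02],
    [0.97, 0.6, 0.01],
    [0.99, 0.4, 0.03],
]
means, entropies = predictive_stats(samples)
pseudo_labels = select_pseudo_labels(means, entropies)
```

In an iterative scheme of this kind, the model would be fine-tuned on the retained pseudo-labels in the target domain and the selection step repeated; the second pixel in the example, whose averaged probability is close to 0.5, would be excluded from that round of training.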

Method Overview

Publication:

M. Cai, F. Lu and Y. Sato, "Generalizing Hand Segmentation in Egocentric Videos with Uncertainty-Guided Model Adaptation," IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020. (acceptance rate: 22%)

[paper] [slides] [code]